Website failure under load? This is why

You’ve spent your budget on social media, ads, and preparations for what should be the year’s biggest campaign, only to see your website buckle under the load of thousands of simultaneous users. Visitors trying to reach your site are met with long load times and dreaded HTTP error codes instead.

Your worst nightmare came true. Your website went down. But what went wrong?

Over 10 years of developing and maintaining microservices at Queue-it, a SaaS application that handles millions of users every day, has shown me that the cause is rarely a single flaw.

That said, here are my six hunches:

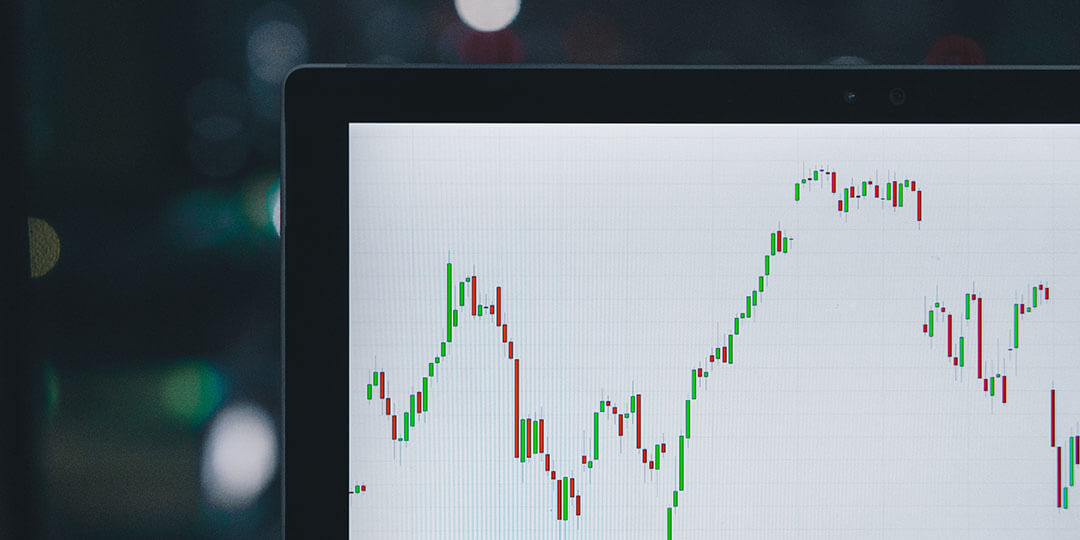

Monitoring won’t make your application scalable, but it’s still a foundational part of your defense against website failure.

You’re already behind if you lack insight into your application’s metrics, aren’t alerted to failures, or don’t have easy access to your logs. Without these, your ability to react swiftly to a failure is considerably weakened.
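To make “being alerted to failures” concrete, here’s a minimal sketch of the kind of threshold check an APM or alerting tool runs for you. It assumes you already collect per-request latency and status codes. The `Request` type, the `check_window` function, and the thresholds (1% server errors, 500 ms p95 latency) are hypothetical choices for illustration. They are not recommendations from any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Request:
    latency_ms: float  # time to serve the request
    status: int        # HTTP status code returned to the user


def p95(values):
    """Nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) using integer math
    return ordered[rank - 1]


def check_window(requests, max_error_rate=0.01, max_p95_ms=500.0):
    """Return alert messages for one time window of requests (hypothetical thresholds)."""
    if not requests:
        return []
    alerts = []
    server_errors = sum(1 for r in requests if r.status >= 500)
    error_rate = server_errors / len(requests)
    if error_rate > max_error_rate:
        alerts.append(f"error rate {error_rate:.1%} exceeds {max_error_rate:.1%}")
    latency = p95([r.latency_ms for r in requests])
    if latency > max_p95_ms:
        alerts.append(f"p95 latency {latency:.0f} ms exceeds {max_p95_ms:.0f} ms")
    return alerts


# Usage: one window where 5 of 100 requests failed with HTTP 503.
window = [Request(120, 200)] * 95 + [Request(2000, 503)] * 5
for alert in check_window(window):
    print("ALERT:", alert)  # in practice: notify the on-call engineer
```

A hosted APM service does the collection, storage, and paging for you. The underlying idea is the same: aggregate over a time window and compare against thresholds you choose.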

Data is just as important after the event: it lets you conduct a root cause analysis based on facts, not guesswork. An accurate understanding of the root cause will allow you to optimize your system effectively for the future.

If your application is a black box, there’s good news: there’s a slew of log management and application performance management (APM) SaaS solutions that can help and are easy to get up and running (Datadog, New Relic, Loggly, and Splunk, to name a few).

An essential feature of many websites is showing data, such as inventory status, updated in real time. Odds are this is done by querying your database on every request. That might seem like a sound idea from a business point of view, but it’s actually a terrible idea if you’re trying to build a scalable system that you can afford.

Caching is one of the most effective and least expensive ways to improve scalability and performance. It can be implemented at the infrastructure, application, or data level. Each has its advantages, but the infrastructure level is likely where you’ll see the greatest rewards for the least effort.
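As a rough illustration of application-level caching, here’s a minimal cache-aside sketch. It assumes a short time-to-live (TTL) is acceptable, meaning that showing inventory a few seconds old is fine for your business. `TTLCache` and `fetch_inventory_from_db` are hypothetical names, and the loader stands in for your real database query.

```python
import time


class TTLCache:
    """Minimal in-process cache-aside helper with a fixed time-to-live per entry."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock   # injectable so tests can control time
        self._entries = {}   # key -> (expires_at, value)

    def get_or_load(self, key, loader):
        """Return the cached value, calling loader(key) only on a miss or after expiry."""
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]                      # fresh hit: no database round trip
        value = loader(key)                      # miss or stale: query the source of truth
        self._entries[key] = (now + self.ttl, value)
        return value


db_queries = []


def fetch_inventory_from_db(sku):
    """Hypothetical stand-in for a real inventory query."""
    db_queries.append(sku)
    return 42  # units in stock


cache = TTLCache(ttl_seconds=5)
for _ in range(1_000):  # 1,000 page views arriving within a few milliseconds
    stock = cache.get_or_load("sku-123", fetch_inventory_from_db)
print(len(db_queries))
```

With a 5-second TTL, each process queries the database for a given product at most about once every 5 seconds, no matter how many visitors view it. This sketch isn’t thread-safe. It also doesn’t stop many simultaneous misses from all querying the database when an entry expires (a “cache stampede”). A production setup, often a shared cache such as Redis or Memcached, needs to handle both.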

If you don’t have a Content Delivery Network (CDN) in front of your site, adding one is a clear next step. If you already have a CDN in place, there’s a good chance you’re not getting the most out of it.

Originally, CDNs served static content such as images. Today they do much more, from caching dynamic content to routing and DDoS protection, and some even let you run your own code on their servers. Taken together, this means you can offload more and more of your website onto the CDN provider’s infrastructure, reducing the strain on your own servers.
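Much of getting more out of a CDN comes down to telling it what it may cache. The sketch below picks standard HTTP `Cache-Control` directives per URL path. The path layout is an assumption for illustration: `/static/` holds fingerprinted assets and `/account/` holds personalized pages. The TTL values are also assumptions. How strictly a given CDN honors `s-maxage` and `stale-while-revalidate` varies by provider, so check its documentation.

```python
def cache_headers(path):
    """Pick Cache-Control headers for a response so a CDN can absorb most traffic.

    Assumes a hypothetical URL layout: fingerprinted assets under /static/,
    personalized pages under /account/, and shared pages everywhere else.
    """
    if path.startswith("/static/"):
        # The file name changes whenever the content does, so cache for a year.
        return {"Cache-Control": "public, max-age=31536000, immutable"}
    if path.startswith("/account/"):
        # Per-user content must never be stored by a shared cache such as a CDN.
        return {"Cache-Control": "private, no-store"}
    # Shared dynamic pages (e.g. product listings): s-maxage lets shared caches
    # reuse the page for 30 s; stale-while-revalidate allows serving a stale
    # copy for up to 60 s more while a fresh one is fetched.
    return {"Cache-Control": "public, max-age=0, s-maxage=30, stale-while-revalidate=60"}


for path in ("/static/app.3f9c1e.js", "/account/orders", "/products/sneakers"):
    print(path, "->", cache_headers(path)["Cache-Control"])
```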

For decades we’ve built applications primarily on relational databases. Steep prices for data storage and software licenses have led us to misuse the relational database to store images or temporary data such as session state. A relational database is a powerful tool, but it’s only one of many. To build a scalable system, you need to use many tools, not just one.

At the same time, we’ve been told that data must be normalized down to an atomic level, ignoring the fact that the data has to be joined back together before it’s useful. The result is complex SQL queries that slowly kill database performance.

There are many alternatives, including document, time-series, in-memory, and graph databases, message streams, or even a pattern like Command Query Responsibility Segregation (CQRS), which generates optimized read models.
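To show what an “optimized read model” means in practice, here’s a minimal CQRS-style sketch. Write-side events are projected into a denormalized view, so a product page is served by a single key lookup instead of a multi-table join. The event names and fields are hypothetical. The in-memory dict stands in for whatever store you’d actually use for reads, such as a document database or cache.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProductView:
    """Denormalized read model: everything a product page needs, pre-joined."""
    sku: str
    name: str = ""
    price_cents: int = 0
    stock: int = 0


class ProductReadModel:
    def __init__(self):
        self.views = {}  # sku -> ProductView

    def apply(self, event):
        """Project one write-side event (a dict with hypothetical fields) into the view."""
        view = self.views.setdefault(event["sku"], ProductView(event["sku"]))
        kind = event["type"]
        if kind == "ProductCreated":
            view.name = event["name"]
            view.price_cents = event["price_cents"]
        elif kind == "PriceChanged":
            view.price_cents = event["price_cents"]
        elif kind == "StockAdjusted":
            view.stock += event["delta"]

    def get(self, sku) -> Optional[ProductView]:
        # Query side: one key lookup, no joins at read time.
        return self.views.get(sku)


read_model = ProductReadModel()
for event in [
    {"type": "ProductCreated", "sku": "A1", "name": "Sneaker", "price_cents": 8999},
    {"type": "StockAdjusted", "sku": "A1", "delta": 10},
    {"type": "PriceChanged", "sku": "A1", "price_cents": 7999},
    {"type": "StockAdjusted", "sku": "A1", "delta": -3},
]:
    read_model.apply(event)
print(read_model.get("A1"))
```

In a real system the events would typically arrive via a message stream. That makes the read model eventually consistent with the write side, in exchange for reads that stay cheap under load.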

Each use case has its own specifics and requirements, and achieving scalability means choosing the right tool for each.

We’ve traditionally modeled our digital applications on the processes and transactions of the physical world. For instance, an ecommerce site will typically authorize the shopper’s credit card synchronously during the sale, just as your local corner store does.

The problem is that this creates a strong dependency between the website and the payment processor, so the website’s performance is limited by the payment processor’s scalability and uptime. In a scalable system, services are autonomous and use asynchronous workflows in an eventually consistent architecture.

If you’ve bought something on Amazon, you may have received an email explaining that your payment didn’t go through and asking you to re-enter your credit card information. This happens because the payment is processed only after the order has been placed and the shopper has received an order confirmation.

The system can then process the transactions at a pace the payment gateway can handle. As a result, the website can at times accept orders at a rate far higher than the payment gateway’s capacity.
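Here’s a minimal, deterministic sketch of that decoupling. Orders are confirmed immediately and placed in a queue. A separate step then charges at most a fixed number of cards per tick, matching the gateway’s capacity. `OrderService`, `charge_card`, and the per-tick capacity are hypothetical. A real system would use a durable message queue and a background worker rather than an in-memory `deque`.

```python
from collections import deque


class OrderService:
    """Accept orders immediately; charge cards later at the gateway's pace."""

    def __init__(self, charge_card, gateway_capacity_per_tick):
        self.charge_card = charge_card            # hypothetical gateway client: order_id -> bool
        self.capacity = gateway_capacity_per_tick
        self.pending = deque()                    # orders awaiting payment
        self.failed_payments = []                 # shoppers to email for new card details

    def place_order(self, order_id):
        # No call to the payment gateway here, so a gateway slowdown can't block checkout.
        self.pending.append(order_id)
        return "order confirmed"

    def process_payments(self):
        """Charge at most `capacity` pending orders; call once per tick (e.g. per second)."""
        processed = 0
        while self.pending and processed < self.capacity:
            order_id = self.pending.popleft()
            if not self.charge_card(order_id):
                self.failed_payments.append(order_id)
            processed += 1
        return processed


def fake_gateway(order_id):
    """Hypothetical gateway that declines every 100th order."""
    return not order_id.endswith("00")


service = OrderService(fake_gateway, gateway_capacity_per_tick=100)
for i in range(1, 501):                 # a burst of 500 orders arrives at once
    service.place_order(f"order-{i}")

ticks = 0
while service.pending:
    service.process_payments()
    ticks += 1
print(ticks, service.failed_payments)
```

The burst of 500 orders is accepted instantly, and the payments drain over five ticks at the gateway’s pace. Declined payments are collected so the shopper can be asked to re-enter their card details, as in the Amazon example.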

The vast majority of transactions we implement in our applications aren’t actually necessary. For the ones we do need, though, converting them into asynchronous, scalable processes usually requires changes to business workflows.

Your application will have limits regardless of infrastructure. A scalable application should, however, be able to use its elasticity to increase capacity. Ideally, a system scales horizontally by adding more servers to a cluster, so that when the number of servers doubles, capacity doubles too. The advantage is that the system can easily adjust capacity to current demand, either automatically or manually.
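If capacity really does scale linearly with servers, the number of servers you need follows from a simple proportion. This sketch uses the rule documented for the Kubernetes Horizontal Pod Autoscaler: desired = ceil(current × observed ÷ target). The CPU target and the min/max bounds here are hypothetical.

```python
def desired_replicas(current_replicas, cpu_percent, target_percent,
                     min_replicas=2, max_replicas=20):
    """Proportional scaling rule: ceil(current * observed / target), clamped to bounds."""
    # Integer ceiling division avoids floating-point rounding surprises.
    raw = -((-current_replicas * cpu_percent) // target_percent)
    return max(min_replicas, min(max_replicas, raw))


print(desired_replicas(4, 90, 60))   # scale out under load
print(desired_replicas(4, 30, 60))   # scale in when quiet, but not below the minimum
```

For example, 4 servers at 90% CPU against a 60% target gives ceil(4 × 90 ÷ 60) = 6 servers. Real autoscalers also add a tolerance band and stabilization windows so they don’t react to every small fluctuation.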

Many applications, though, scale vertically by replacing the server with a larger or smaller one. This often takes significant resources and some downtime. In a vertical model, scaling becomes increasingly expensive due to administrative, hardware, and licensing costs. Yet developing a horizontally scalable system is neither easy nor cheap.

An alternative or complementary approach is to degrade the user experience gracefully as the system runs out of resources. For instance, an advanced, CPU-heavy database search function can be replaced with a simple search function to free up the database’s CPU for other purposes.
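Here’s a minimal sketch of that kind of graceful degradation: a switch that routes searches to a cheap implementation while database CPU is high. The thresholds and the two search functions are hypothetical. The gap between the degrade and recover thresholds (hysteresis) keeps the site from flipping back and forth when CPU hovers near a single limit.

```python
class SearchDegrader:
    """Route searches to a cheap implementation while the database is under pressure."""

    def __init__(self, degrade_at=80, recover_at=60):
        self.degrade_at = degrade_at    # database CPU % at which to degrade (hypothetical)
        self.recover_at = recover_at    # CPU % at which full search returns (hypothetical)
        self.degraded = False

    def update(self, db_cpu_percent):
        """Feed in the latest database CPU reading, e.g. from your monitoring system."""
        if not self.degraded and db_cpu_percent >= self.degrade_at:
            self.degraded = True
        elif self.degraded and db_cpu_percent <= self.recover_at:
            self.degraded = False

    def search(self, query, advanced_search, simple_search):
        # Both search functions are hypothetical callables supplied by the caller.
        return simple_search(query) if self.degraded else advanced_search(query)


degrader = SearchDegrader()
for cpu in (50, 85, 70, 55):
    degrader.update(cpu)
    print(cpu, "->", "simple" if degrader.degraded else "advanced")
```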

Another option is to give a certain share of users access straight away and redirect excess users to a virtual waiting room, or online queue, that doesn’t strain your system.
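To illustrate the idea, here’s a generic, in-memory sketch of first-in, first-out admission control. Up to `capacity` users are active at once, and everyone else waits their turn. It isn’t a description of how Queue-it or any other product works. For the queue itself not to strain your system, it would need to run outside your own servers rather than inside them as it does here.

```python
from collections import deque


class WaitingRoom:
    """Admit up to `capacity` concurrent users; queue everyone else first-in, first-out."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.active = set()
        self.queue = deque()

    def arrive(self, user_id):
        # Admit straight away only if there is room and nobody is already waiting.
        if len(self.active) < self.capacity and not self.queue:
            self.active.add(user_id)
            return "admitted"
        self.queue.append(user_id)
        return f"queued at position {len(self.queue)}"

    def leave(self, user_id):
        self.active.discard(user_id)
        # Hand freed slots to the longest-waiting visitors.
        while self.queue and len(self.active) < self.capacity:
            self.active.add(self.queue.popleft())


room = WaitingRoom(capacity=2)
for user in ("ann", "bob", "cat", "dan"):
    print(user, room.arrive(user))
room.leave("ann")
print(sorted(room.active), list(room.queue))
```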

Even with superb technical architecture, brilliant developers, and exceptional infrastructure, your application will still have limitations. You almost certainly run some type of distributed system, and networking, latency, multithreading, and the like introduce new sources of error that restrict your application’s scalability.

It’s essential to be aware of these limitations and prepare for them before they cause errors in production. Systematically load testing your application is the best way to do this. At first, you’ll likely find that each load test exposes yet another limitation. That’s why it’s critical to set aside enough time for load testing and to run multiple iterations to identify and fix performance limitations.

Remember, too, that new code can introduce new limitations, so it’s important to run your load tests periodically.
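As a starting point, here’s a minimal load-test harness using only Python’s standard library. It calls a target concurrently from a thread pool and reports the error count plus median and 95th-percentile latency. The `flaky_endpoint` stub is hypothetical and simulates an occasional HTTP 503. In a real test you’d replace it with a function that requests a staging environment. For serious volumes, a dedicated load-testing tool will generate far more load than a Python thread pool.

```python
import statistics
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def run_load_test(target, total_requests, concurrency):
    """Call target() total_requests times from `concurrency` threads and summarize."""

    def timed_call(_):
        start = time.perf_counter()
        try:
            target()
            ok = True
        except Exception:  # any exception counts as a failed request
            ok = False
        return ok, (time.perf_counter() - start) * 1000  # latency in ms

    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(timed_call, range(total_requests)))

    latencies = sorted(ms for _, ms in results)
    p95_rank = (95 * len(latencies) + 99) // 100  # nearest-rank percentile
    return {
        "requests": len(results),
        "errors": sum(1 for ok, _ in results if not ok),
        "p50_ms": statistics.median(latencies),
        "p95_ms": latencies[p95_rank - 1],
    }


_lock = threading.Lock()
_calls = 0


def flaky_endpoint():
    """Hypothetical stand-in for an HTTP call: ~1 ms of work, every 50th call fails."""
    global _calls
    with _lock:
        _calls += 1
        n = _calls
    time.sleep(0.001)
    if n % 50 == 0:
        raise RuntimeError("HTTP 503 Service Unavailable")


report = run_load_test(flaky_endpoint, total_requests=200, concurrency=20)
print(report)
```

Raising `concurrency` from one run to the next and watching where errors or p95 latency jump is one way to find the next limitation each iteration exposes.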

If you’re interested in how to get started with load testing easily and cheaply, check out my post on running less painful, almost free, web load testing, or read Queue-it's complete guide to load testing.

Written by: Martin Larsen, Director of Product, MScIT