The 2026 FIFA World Cup runs from June 11 to July 19. Forty-eight teams. One hundred and four matches. And for streaming platforms, 104 separate moments where everything can go wrong.

The whistle blows. Millions of fans around the globe lean in. In that split second, your streaming service isn’t just an app—it’s the stadium.

Every major live sporting event produces the same result: a wave of traffic that arrives faster than infrastructure can respond. With 48 teams and 104 matches between June 11 and July 19, the 2026 FIFA World Cup will send that wave at streaming platforms not once but repeatedly, across nearly six weeks.

The question isn't whether surges will happen. It's how big they’ll be, and whether your platform will still be standing when they arrive.

Streaming platforms’ video delivery layers are built to handle large audiences. But when traffic to streaming services surges suddenly, it rarely hits the delivery layer first. The failure point is usually the front door: the systems that manage sign‑in, accounts, and session setup, along with the shared backend services that sit behind them.

Picture the opening match of the World Cup. Kick-off is at 18:00. At 17:58, a TV promo airs across three national networks. In 90 seconds, login requests go from 1,000 per minute to 100,000. The load balancer holds, but the authentication service doesn't. Connection pools exhaust. Queries back up. Response times climb from 200ms to 8 seconds. Users get 504 errors.
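One way to see why connection pools run dry in a scenario like this is Little's law: the average number of requests in flight equals the arrival rate multiplied by the time each request spends in the system. The sketch below applies it to the figures in the scenario. It is a back-of-the-envelope estimate that assumes steady-state averages, and the function name `in_flight_requests` is purely illustrative.

```python
def in_flight_requests(requests_per_minute: float, response_seconds: float) -> float:
    """Little's law: average concurrency = arrival rate x time in system."""
    arrivals_per_second = requests_per_minute / 60
    return arrivals_per_second * response_seconds


# Before the promo: 1,000 logins per minute at 200 ms each.
before = in_flight_requests(1_000, 0.2)

# During the surge: 100,000 logins per minute at 8 seconds each.
during = in_flight_requests(100_000, 8.0)

print(f"before: ~{before:.1f} concurrent, during: ~{during:,.0f} concurrent")
```

Under those assumptions, the authentication service goes from roughly three concurrent requests to more than thirteen thousand, which is the kind of jump that exhausts a connection pool sized for normal traffic.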

What follows isn't just a technical incident:

- Fans are frustrated: Error messages and failed logins mean paying customers can't access content they've already paid for, at the exact moment they wanted it most.

- They don't wait around: The match won't pause for your systems to recover. Fans find another way—a rival service, or an illegally hosted stream—and some never come back to your platform.

- Support queues fill within minutes: Tens of thousands of support tickets before half-time, with no fast path to resolution while the match is live.

- Social media moves faster than your comms team: A trending hashtag during kick-off is visible to the same audience your rights deal was meant to reach.

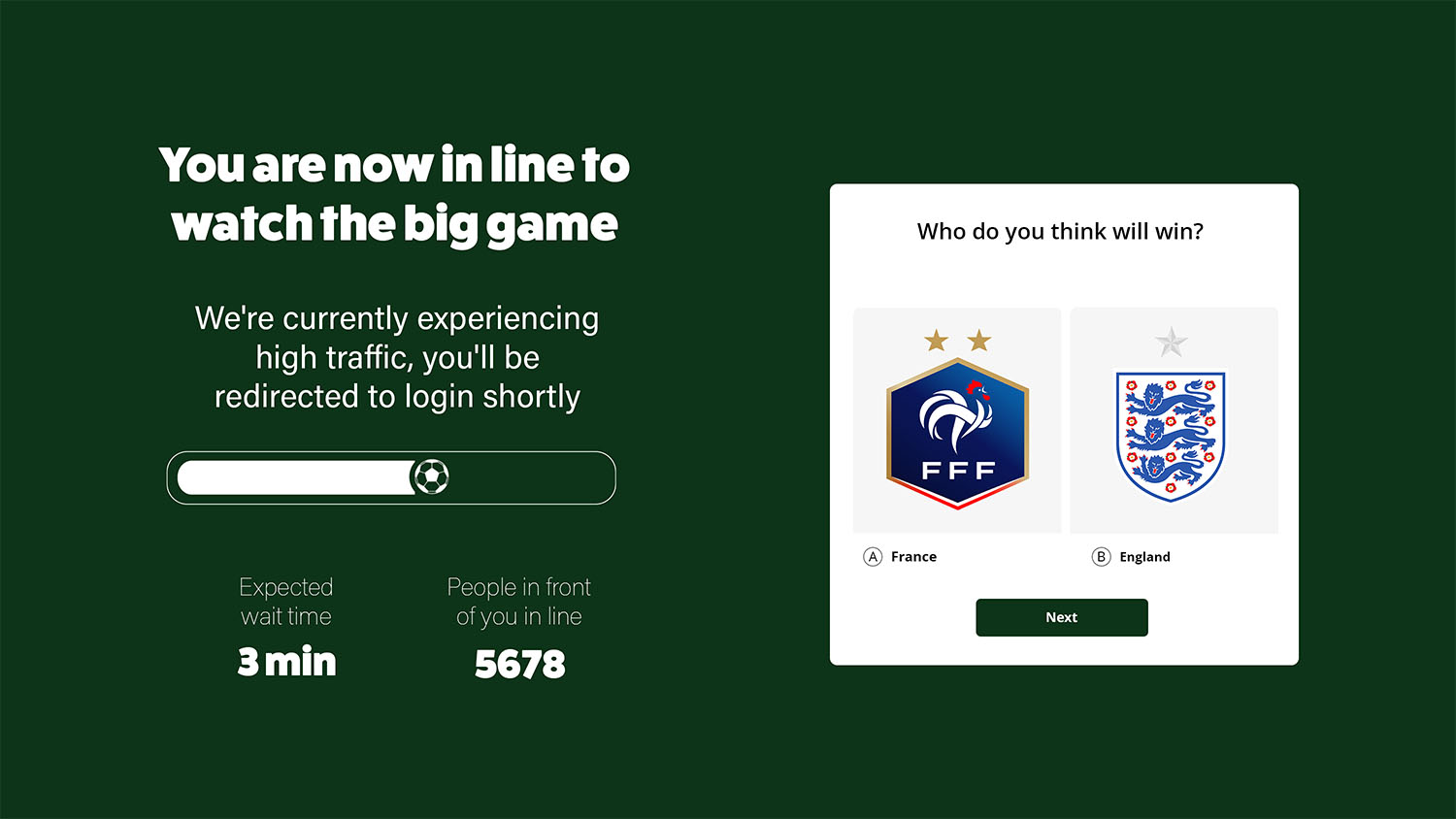

A virtual waiting room prevents this chaos by controlling the rate at which users enter the authentication flow. Rather than allowing 100,000 login attempts to hit your systems in 60 seconds, it holds excess traffic in a fair, ordered queue and releases visitors to login at the rate your infrastructure can reliably handle.
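The idea of a fair, ordered queue with a controlled release rate can be sketched in a few lines. This is a toy model, not Queue-it's implementation: the `WaitingRoom` class, its `release_per_tick` parameter, and the visitor IDs are all hypothetical names chosen for illustration.

```python
from collections import deque


class WaitingRoom:
    """Toy FIFO waiting room: visitors are admitted in arrival order."""

    def __init__(self, release_per_tick: int) -> None:
        if release_per_tick <= 0:
            raise ValueError("release_per_tick must be positive")
        self.release_per_tick = release_per_tick
        self._queue = deque()

    def join(self, visitor_id: str) -> int:
        """Add a visitor and return their 1-based position in the queue."""
        self._queue.append(visitor_id)
        return len(self._queue)

    def release(self) -> list:
        """Admit up to release_per_tick visitors, oldest first."""
        count = min(self.release_per_tick, len(self._queue))
        return [self._queue.popleft() for _ in range(count)]

    def __len__(self) -> int:
        return len(self._queue)


# Seven visitors arrive at once; the backend can take three per tick.
room = WaitingRoom(release_per_tick=3)
for i in range(7):
    room.join(f"visitor-{i}")

first_batch = room.release()   # the three earliest arrivals
second_batch = room.release()  # the next three
```

However large the burst, the login flow in this model only ever receives `release_per_tick` visitors at a time, and nobody is dropped: the seventh visitor simply waits for the third tick.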

Some visitors experience a short wait during the biggest login surges, but 87% of consumers say they prefer a short wait for a website that works over immediate access to a slow or buggy one. With a virtual waiting room, all of them get in. None of them see an error page or timeout.

For streaming platforms, a virtual waiting room protects more than login. During a World Cup surge, your account infrastructure is simultaneously handling:

- New sign-ups from first-time subscribers rushing to activate before kick-off

- Password resets from returning users who haven't logged in since the last major tournament

- Subscription upgrades from viewers who realise they need a premium tier minutes before the match starts

Each flow touches different backend systems. Each carries its own failure mode. A virtual waiting room can protect the entire account journey, not just the login endpoint.

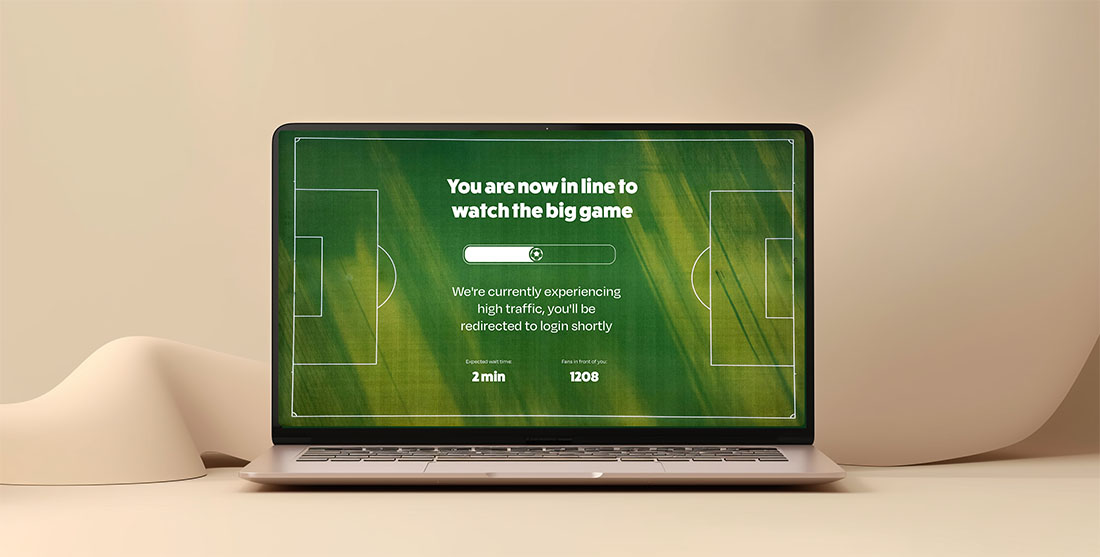

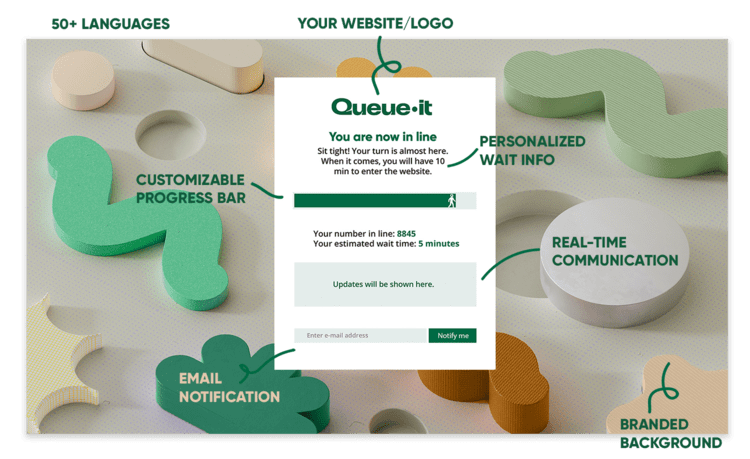

When traffic to a protected page exceeds a defined threshold, visitors are automatically redirected to a branded waiting room. They see their position in the queue, an estimated wait time, and a progress bar. Once capacity allows, they're released back to the site in the order they arrived.
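The estimated wait time and progress bar follow from simple arithmetic on queue position and release rate. The sketch below shows one plausible way to derive them. The function names and the example figures (a release rate of 6,000 visitors per minute, a starting position of 12,000) are assumptions for illustration, not Queue-it's actual formula.

```python
import math


def estimated_wait_seconds(position: int, release_per_minute: int) -> int:
    """Seconds until the visitor at `position` (1-based) is admitted."""
    if position < 1 or release_per_minute <= 0:
        raise ValueError("position must be >= 1 and release rate positive")
    return math.ceil(position * 60 / release_per_minute)


def progress_fraction(initial_position: int, current_position: int) -> float:
    """Share of the visitor's original wait that has already elapsed."""
    if not 0 <= current_position <= initial_position or initial_position == 0:
        raise ValueError("positions out of range")
    return 1 - current_position / initial_position


# A visitor joins at position 12,000 while 6,000 visitors are released per minute.
wait = estimated_wait_seconds(position=12_000, release_per_minute=6_000)

# Later, the same visitor has moved up to position 3,000.
progress = progress_fraction(initial_position=12_000, current_position=3_000)
```

In this example the visitor is told to expect a wait of about two minutes, and the progress bar reads 75% once three quarters of the people ahead of them have been admitted.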

Critically, visitors in the waiting room are hosted on Queue-it's infrastructure, not yours. Your system carries only the users actively moving through it, not the full weight of everyone who arrived at once.

The always-on configuration matters here. A scaling or queue system that requires manual activation is a liability during a fast-moving spike. By the time an engineer notices the anomaly and enables the queue, the database is already under pressure.

Queue-it's 24/7 Peak Protection removes that dependency: traffic exceeds the ceiling, the queue activates instantly, traffic subsides, the queue deactivates. No human intervention required.
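The activate-and-deactivate behaviour described here amounts to a small state machine. The following is a minimal sketch under stated assumptions: the `QueueSwitch` class is hypothetical, the ceiling of 6,000 arrivals per minute is invented, and the rule that the queue only switches off once nobody is left waiting is one reasonable design choice rather than documented Queue-it behaviour.

```python
class QueueSwitch:
    """Toy always-on switch: the queue turns on and off without an operator."""

    def __init__(self, ceiling_per_minute: int) -> None:
        self.ceiling_per_minute = ceiling_per_minute
        self.active = False

    def observe(self, arrivals_per_minute: int, waiting: int) -> bool:
        """Update the state from the latest traffic sample and return it."""
        if arrivals_per_minute > self.ceiling_per_minute:
            # Inflow exceeds what the backend can absorb: start queueing.
            self.active = True
        elif waiting == 0:
            # Inflow is back under the ceiling and the queue has drained.
            self.active = False
        return self.active


switch = QueueSwitch(ceiling_per_minute=6_000)

# (arrivals per minute, visitors currently waiting) sampled over time.
samples = [(1_000, 0), (100_000, 0), (50_000, 20_000), (4_000, 500), (3_000, 0)]
states = [switch.observe(arrivals, waiting) for arrivals, waiting in samples]
```

The switch flips on at the second sample, the moment inflow crosses the ceiling, and stays on through the fourth sample because visitors are still waiting even though inflow has dropped. No step in that sequence depends on an engineer noticing the spike.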


Scaling is necessary, but it doesn't solve the problem on its own.

For timed events like a match start, pre-scaling is the smart approach. Provision additional capacity before the match kicks off, eliminate cold-start delay, and avoid relying on autoscaling to detect and respond in real time. But pre-scaling requires accurate demand estimates, and during a World Cup those estimates are difficult to make. The consequences of getting it wrong run in both directions:

- Over-provision and you've absorbed significant infrastructure cost for headroom you may not need

- Under-provision and you're back to the same overload problem, with a slightly larger buffer before it hits

There's also a failure mode that neither pre-scaling nor autoscaling reliably resolves. Even when application servers are ready, the database rarely scales with them.

A sudden surge of authenticated sessions creates a connection pool problem: more concurrent application instances mean more concurrent database connections, more lock contention, and more queued queries. Scaling the application tier without protecting what sits behind it can accelerate a crash rather than prevent one.
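The connection arithmetic is worth making concrete. In the sketch below, every figure is hypothetical: a database that accepts 500 connections and application instances that each hold a pool of 20. The function names are illustrative too.

```python
def connections_demanded(app_instances: int, pool_size: int) -> int:
    """Worst-case connections if every instance fills its pool."""
    return app_instances * pool_size


def max_safe_instances(db_max_connections: int, pool_size: int) -> int:
    """Largest instance count whose full pools still fit the database limit."""
    return db_max_connections // pool_size


DB_MAX_CONNECTIONS = 500  # hypothetical database connection limit
POOL_SIZE = 20            # hypothetical pool size per application instance

normal = connections_demanded(app_instances=20, pool_size=POOL_SIZE)
scaled = connections_demanded(app_instances=60, pool_size=POOL_SIZE)
limit = max_safe_instances(DB_MAX_CONNECTIONS, POOL_SIZE)

print(f"20 instances: {normal} connections, 60 instances: {scaled}, safe limit: {limit}")
```

With these numbers, tripling the application tier from 20 to 60 instances raises worst-case demand from 400 to 1,200 connections against a limit of 500. Anything beyond 25 instances can ask the database for more connections than it will grant.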

A virtual waiting room complements your scaling strategy by controlling what scaling alone can't: the rate of traffic entering the system in the first place.

The result protects the customer experience and also reduces scaling and infrastructure costs:

- On average, Queue-it customers report a 38% decrease in server scaling costs

- On average, Queue-it customers report a 33% decrease in database scaling costs

- 80% of customers say Queue-it helped them avoid a major system overhaul

East Asia: absorbing the TV-to-digital surge

A major broadcaster in East Asia runs Queue-it as a permanent layer on their on-demand platform. During live events, they regularly see 300,000 or more concurrent viewers arrive, driven by TV broadcast promotion: the steepest and least predictable kind of spike.

By automating queue activation through traffic thresholds and APIs, their engineering team no longer treats these moments as incidents requiring manual response. The traffic arrives, the queue absorbs the excess, and the platform remains stable.

Europe: protecting high-intent subscription moments

A leading European sports streaming platform uses Queue-it specifically on their sign-up and subscription upgrade pages during major rights launches.

These are high-intent moments: fans are motivated, payment intent is high, and an error at checkout kills a subscription that is unlikely to be attempted again. A controlled entry flow protects both the infrastructure and the conversion rate simultaneously.

The World Cup compounds the challenge in a way most live events don't. It isn't a single spike to plan for and recover from. It's 104 matches across six weeks, each with its own audience profile, time zone distribution, and traffic shape. A platform that survives the opening match still has to handle a quarter-final in week five.

Managing that sustained pressure doesn't require a complete infrastructure overhaul. It requires accepting that demand will exceed capacity during certain windows, and building a mechanism to handle that gracefully—rather than hoping pre-scaling estimates hold or that autoscaling closes the gap in time.

The fans will arrive. The question is whether your front door is ready for them.